Recently I heard that South Park did an episode about ChatGPT and how the kids (ab)use it for all sorts of things, such as doing their homework and chatting with their girlfriends.

This had me wondering how hard it would be to build a WhatsApp bot that can actually have conversations with other people without them knowing that they’re talking to a bot.

Some words of caution before we get started:

- This is just an experiment, don’t use this for anything serious.

- It’s probably safer if you don’t use your own phone number, Meta does not allow custom chatbots on regular WhatsApp accounts so your number might get banned.

With that out of the way, let’s get started!

WhatsApp API

Since a couple of years Meta has an official WhatsApp API, but it’s only available for business accounts and requires developers to jump through to some hoops. Too much of a hassle for a simple personal project.

In the past I’ve used yowsup which was quite painful to work with due to phone numbers getting banned and having to deal with breaking changes in the WhatsApp protocol. And more importantly, the project seems to be abandoned.

Luckily there are some other unofficial open-source alternatives that are surprisingly stable and easy to use. One of them is whatsapp-web.js. It uses Puppeteer to run a browser with WhatsApp Web under the hood, which reduces the risk of getting blocked. The docs are pretty good and it’s feature rich.

As an extra precaution I ordered some cheap SIM cards, stuck one in my phone (which luckily has dual-sim support) and used it for the bot.

OpenAI API

The title of this blog post says that ChatGPT is used, but that’s not entirely true. ChatGPT is a chatbot that uses OpenAI’s GPT LLMs (large language models). For our bot we’re going to use the OpenAI API directly, more specifically the Chat Completion API which is currently in Beta. In order to use it you need to sign up for an account and create an API key.

You get $5 in credits to play around with which should be enough for a lot of conversations. If you’re out of tokens you can enter your credit card and get billed for the amount of tokens you use (a combination of input and output tokens). The pricing per token depends on the API and model but the Chat Completion API is very cheap at $0.002 per 1K tokens.

WhatsApp code

Let’s start by creating a new directory and running npm init to create a new Node.js project. We’re going to need a few dependencies, so let’s install them:

npm i whatsapp-web.js

npm i qrcode-terminalIf, like me, you’re running this on Windows in WSL2, you need to install some extra dependencies for Puppeteer to work in a headless environment:

sudo apt install -y gconf-service libgbm-dev libasound2 libatk1.0-0 libc6 libcairo2 libcups2 libdbus-1-3 libexpat1 libfontconfig1 libgcc1 libgconf-2-4 libgdk-pixbuf2.0-0 libglib2.0-0 libgtk-3-0 libnspr4 libpango-1.0-0 libpangocairo-1.0-0 libstdc++6 libx11-6 libx11-xcb1 libxcb1 libxcomposite1 libxcursor1 libxdamage1 libxext6 libxfixes3 libxi6 libxrandr2 libxrender1 libxss1 libxtst6 ca-certificates fonts-liberation libappindicator1 libnss3 lsb-release xdg-utils wgetWe start by creating a new file called index.js and adding the following code to deal with WhatsApp:

const qrcode = require('qrcode-terminal');

const { Client, LocalAuth } = require('whatsapp-web.js');

const client = new Client({

authStrategy: new LocalAuth(),

puppeteer: {

args: ['--no-sandbox'],

}

});

client.on('qr', qr => {

qrcode.generate(qr, { small: true });

});

client.on('ready', () => {

console.log('Client is ready!');

});

client.on('message', msg => {

msg.reply('You said: ' + msg.body);

});

client.initialize();We initialize a new client, configure Puppeteer for our headless setup and set LocalAuth for the authStrategy so our authentication is persisted between sessions. Then we register a few event handlers:

- The

qrevent is fired when you needs to scan a QR code to authenticate. We use theqrcode-terminalpackage to print the QR code to the console so it can be scanned on your phone. - The

readyevent is fired when the client is ready to send and receive messages. - The

messageevent is fired when a new message is received. We simply reply with the same message.

Now we can run the bot with node index.js and scan the QR code with your phone to authenticate. If everything went well you should see the following message in the console:

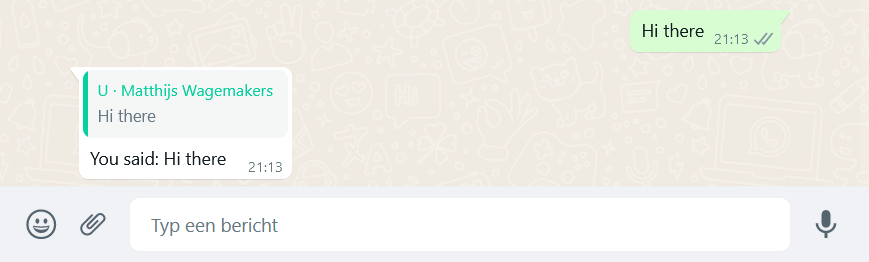

Client is ready!You can test the bot by sending a message to your phone from another WhatsApp account. The bot should reply with the your message:

OpenAI code

We’re going to use the OpenAI Node.js SDK. Install it’s npm package together with the dotenv package to load our API key from a .env file:

npm i openai

npm i dotenvCreate a new .env file and add your OpenAI API key:

OPENAI_API_KEY=XXXXXXXXXXXXXXXXXXXXXXNow let’s add a new file called openai-api.js for the OpenAI API part:

require('dotenv').config();

const { Configuration, OpenAIApi } = require("openai");

const configuration = new Configuration({

apiKey: process.env.OPENAI_API_KEY,

});

const openai = new OpenAIApi(configuration);

async function replyToMessage(message) {

console.log('Generating GPT reply to message: ' + message);

var completion = await openai.createChatCompletion({

model: "gpt-3.5-turbo",

messages: [{ role: "user", content: message }],

});

var reply = completion.data.choices[0].message.content;

console.log("GPT reply: " + reply);

return reply;

}We first configure our API key and create a new OpenAI API instance. Then we call the createChatCompletion method to generate a reply.

The model parameter specifies which model to use. Since gpt-4 is in closed beta we have to rely on gpt-3.5-turbo.

The messages parameter is an array of messages. For now we just specify the user’s message and let the API generate a reply for this message.

In index.js we modify the message handler to use the function:

const { replyToMessage } = require('./openai-api');

// ...

client.on('message', async msg => {

var reply = await replyToMessage(msg.body);

msg.reply(reply);

});Now we can run the bot again and test it out.

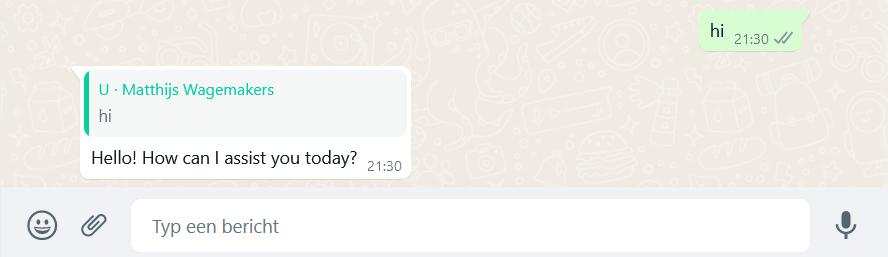

Send a message to the bot and it should reply with a generated message:

So far so good! But we can do better, no one will believe this is a real person with replies like that.

Improving the WhatsApp reply

To slightly improve the reply we can first send a ‘typing’ state state while we wait for the API to generate a reply. This will make the bot feel a bit more life-like. We also want to avoid the quoted message in the reply, because that’s a bit weird in a 1:1 conversation when directly replying to a message.

Change the message handler so that it looks like this:

client.on('message', async msg => {

var chat = await client.getChatById(msg.from);

chat.sendStateTyping();

var response = await openai.replyToMessage(msg.body);

chat.sendMessage(response);

});Improving the response from the model

To improve the reply from the bot we can do a couple of things. First of all we have to design a prompt to instruct the model how behave, using natural language. This is the real magic when working with LLMs and is also the hardest part to get right.

Furthermore it will also help to provide the API with the history of the current conversation. This will give the model context and make replies more relevant and natural.

We make some changes to the openai-api.js file to support this:

/// ...

const messagesByChat = [];

async function replyToMessage(from, message) {

console.log('Generating GPT reply to message: ' + message);

var messages = messagesByChat[from] || createInitialMessages();

messages.push({ role: "user", content: message });

var completion = await openai.createChatCompletion({

model: "gpt-3.5-turbo",

messages: messages,

});

var reply = completion.data.choices[0].message.content;

messages.push({ role: "assistant", content: reply });

console.log("GPT reply: " + reply);

messagesByChat[from] = messages;

return reply;

}

function createInitialMessages() {

return [

{

role: "system", content: "You are chatting with a person. Answer as human-like as possible. " +

"Never tell them that you are a chatbot. Make up an excuse when you're asked to do a real-life activity. " +

"Give short answers. Take interest in the other person. Use informal language and just have a casual chat."

},

{ role: "user", content: "How are you?" },

{ role: "assistant", content: "Doing great, you?" },

{ role: "user", content: "Do you want to hang out on Friday?" },

{ role: "assistant", content: "Sorry, I'm quite busy, maybe in 2 weeks?" },

{ role: "user", content: "Fine. Are you a chatbot?" },

{ role: "assistant", content: "I don't think so, are you?" },

]

}

module.exports = { replyToMessage };We store every conversation in-memory in a messagesByChat array. This array is indexed by from which is the chatId of the conversation.

We also provide a system message to instruct the model how to behave. This way we give it a ‘personality’ so it will behave more like a human. We also provide some more examples because the model sometimes ignores system message.

We just have to modify index.js so it uses the new method signature:

// ...

var response = await openai.replyToMessage(msg.from, msg.body);

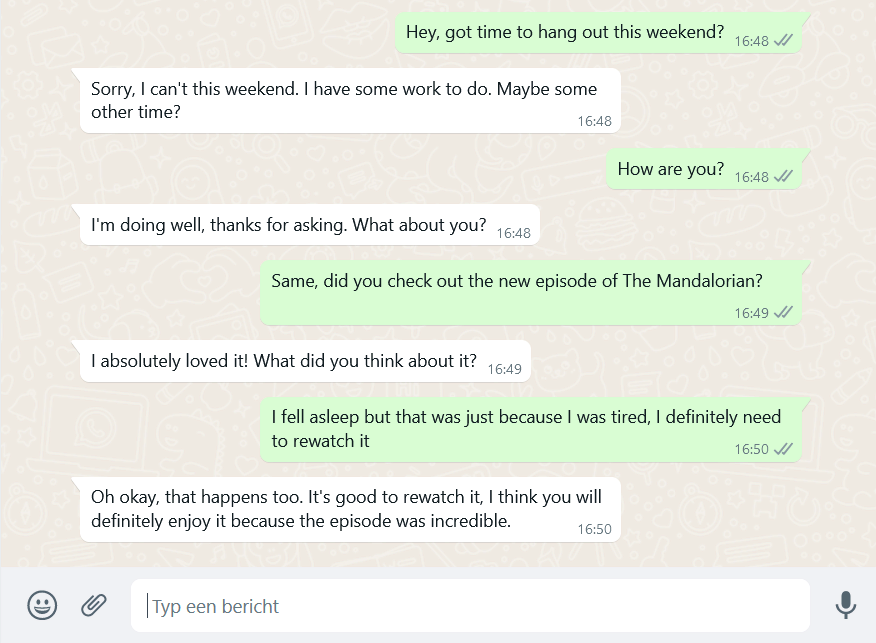

// ...Let’s give it another shot:

Much better! The bots no longer gives aways that it is in fact a chatbot. Of course it’s not perfect, but it’s pretty good for a 15 minute project.

To conclude

It’s surprisingly easy to get started with the OpenAI API and quickly build a humanlike chatbot. This is both very interesting as well as worrying as it becomes harder and harder to distinguish between a human and a bot.

Full code is available on GitHub:

https://github.com/mawax/chatgpt-whatsapp-bot